Accelerating materials language processing with large language models

-

Authors :

Jaewoong Choi, Byungju Lee

-

Journal :

Communications Materials

-

Vol :

5

-

Page :

13

-

Year :

2024

Abstract

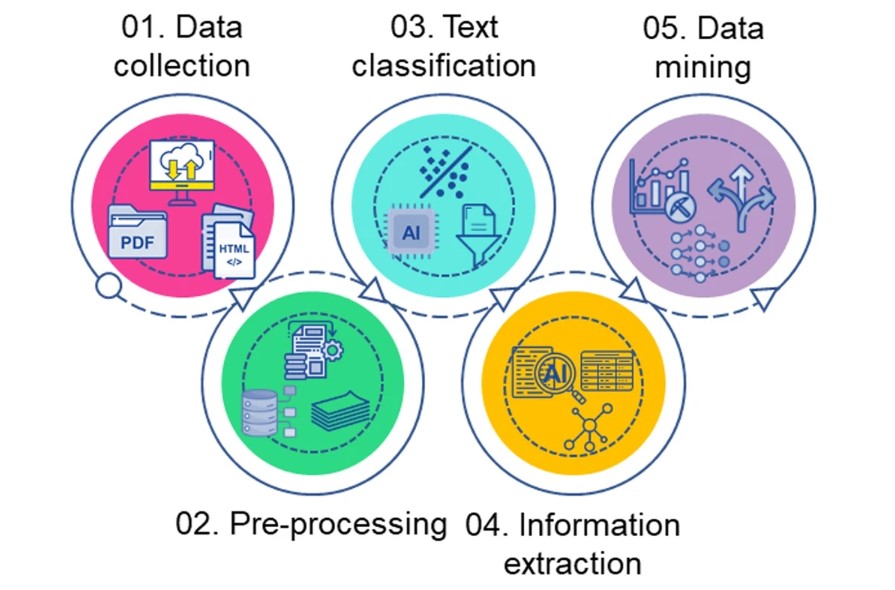

Materials language processing (MLP) can facilitate materials science research by automating the extraction of structured data from research papers. Despite the existence of deep learning models for MLP tasks, there are ongoing practical issues associated with complex model architectures, extensive fine-tuning, and substantial human-labelled datasets. Here, we introduce the use of large language models, such as generative pretrained transformer (GPT), to replace the complex architectures of prior MLP models with strategic designs of prompt engineering. We find that in-context learning of GPT models with few or zero-shots can provide high performance text classification, named entity recognition and extractive question answering with limited datasets, demonstrated for various classes of materials. These generative models can also help identify incorrect annotated data. Our GPT-based approach can assist material scientists in solving knowledge-intensive MLP tasks, even if they lack relevant expertise, by offering MLP guidelines applicable to any materials science domain. In addition, the outcomes of GPT models are expected to reduce the workload of researchers, such as manual labelling, by producing an initial labelling set and verifying human-annotations.